A managed DuckDB warehouse that ingests your largest CSV and Excel datasets into PostgreSQL and runs retrieval-augmented generation on top of every row. Fully hosted, starting at $120/month.

Stream multi-gigabyte CSV and Excel files straight into Postgres without writing a single ETL script.

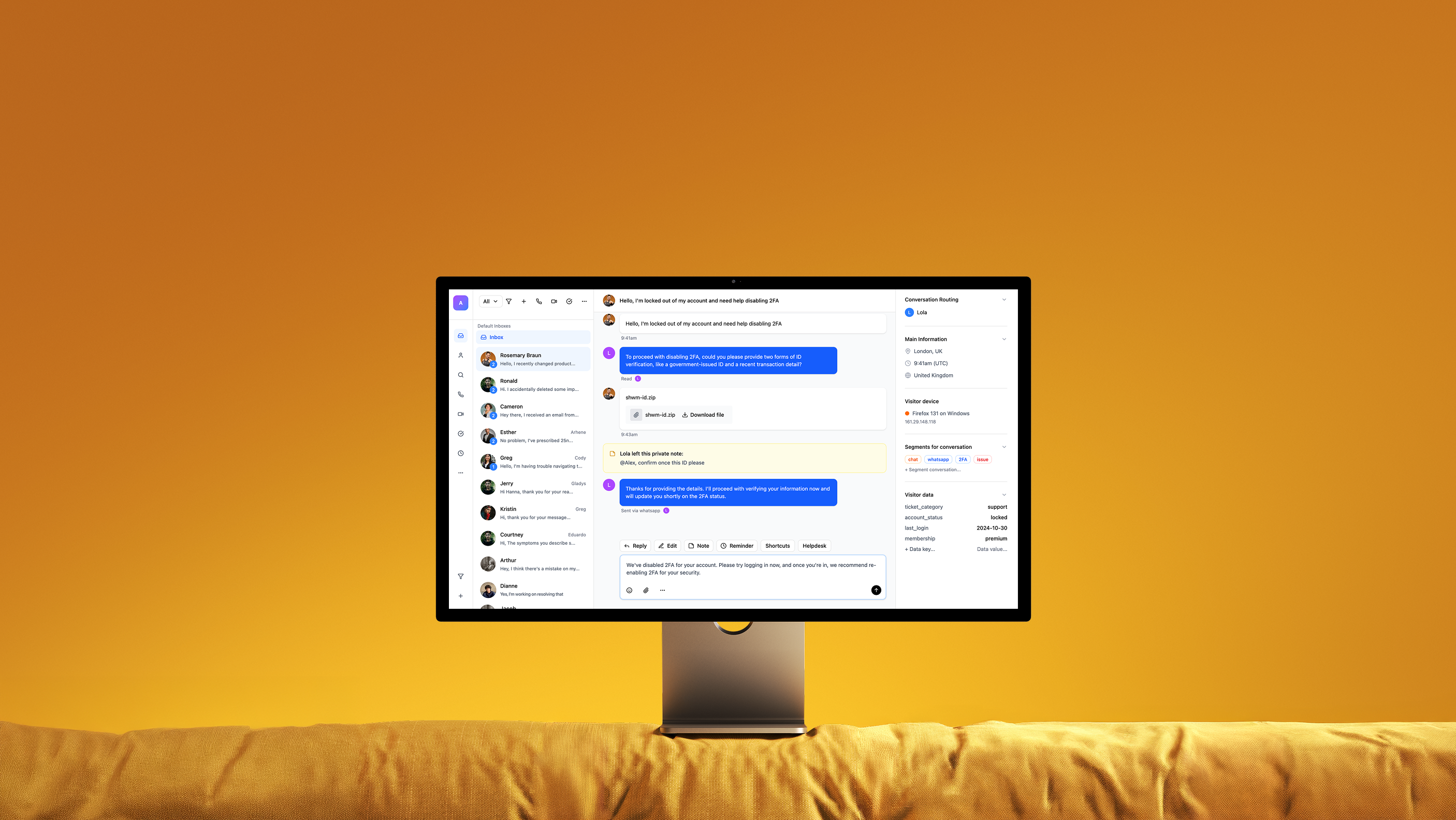

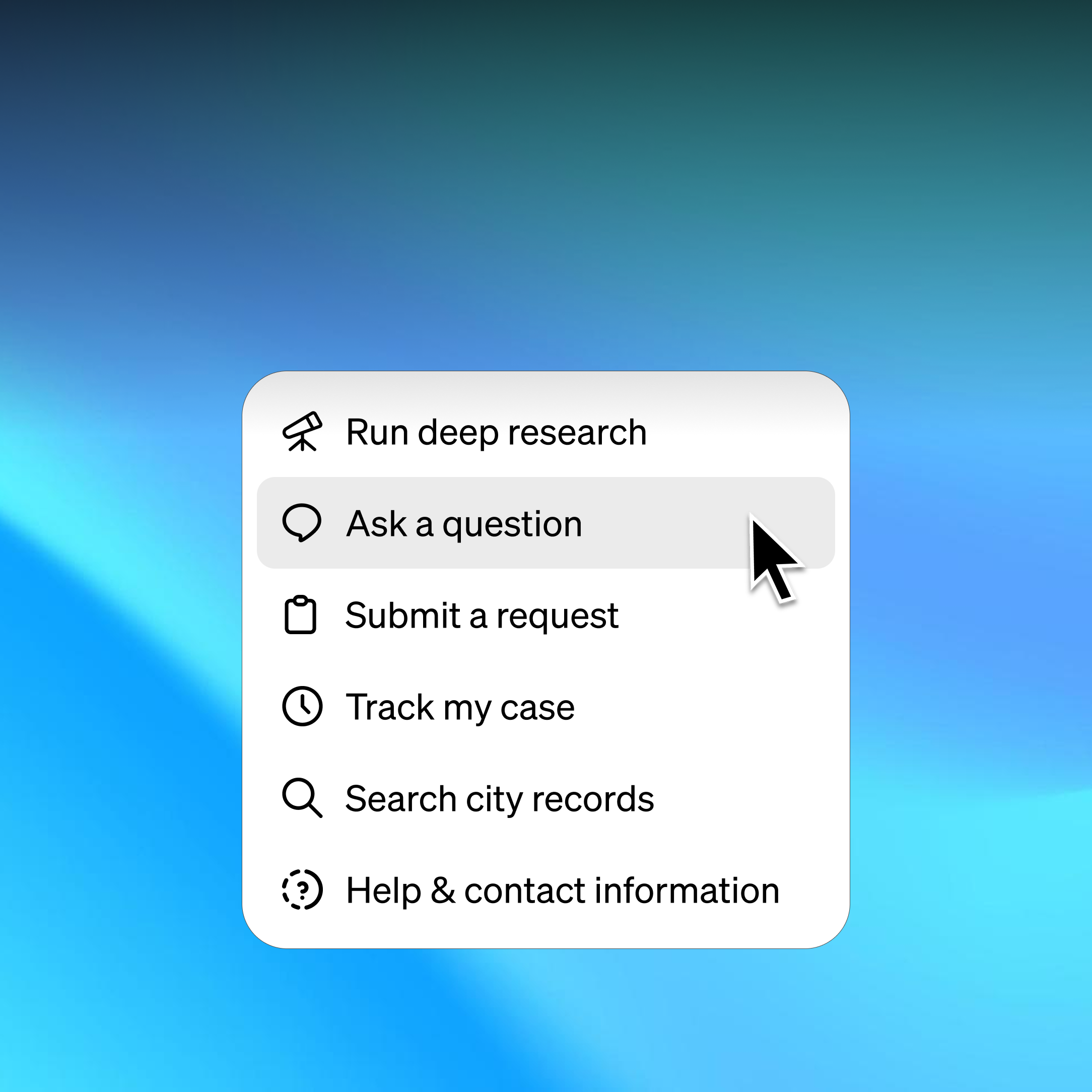

Ask questions in plain English and get answers grounded in your rows, with citations to the exact records.

We run DuckDB, Postgres, and the embedding pipeline. You upload the files; we handle scaling, backups, and tuning.

Drop in a CSV or Excel file and our pipeline ingests, normalizes, and indexes every row into PostgreSQL. Query it through chat, raw SQL, or your own application, all from a single managed workspace.
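To make the ingest-and-normalize step concrete, here is a minimal sketch of the idea, not our production pipeline. It assumes an in-memory SQLite database as a stand-in for PostgreSQL so it runs anywhere, and the names `normalize_header` and `ingest_csv` are hypothetical helpers invented for this illustration. It also assumes every row has the same number of fields as the header.

```python
import csv
import io
import re
import sqlite3
from itertools import islice


def normalize_header(name: str, position: int) -> str:
    """Turn a messy column header into a safe snake_case identifier."""
    cleaned = re.sub(r"[^0-9a-zA-Z]+", "_", name.strip()).strip("_").lower()
    if not cleaned:
        # Blank headers get a positional fallback name.
        cleaned = f"column_{position}"
    if cleaned[0].isdigit():
        # Identifiers should not start with a digit.
        cleaned = f"c_{cleaned}"
    return cleaned


def ingest_csv(stream, conn, table: str, batch_size: int = 1000) -> int:
    """Stream rows from a CSV file object into `table`, one batch at a time.

    Assumption: each data row has as many fields as the header row.
    """
    reader = csv.reader(stream)
    columns = [normalize_header(h, i) for i, h in enumerate(next(reader))]
    column_defs = ", ".join(f'"{c}" TEXT' for c in columns)
    conn.execute(f'CREATE TABLE "{table}" ({column_defs})')
    placeholders = ", ".join("?" for _ in columns)
    insert_sql = f'INSERT INTO "{table}" VALUES ({placeholders})'
    total = 0
    while True:
        # Only `batch_size` rows are held in memory at once.
        batch = list(islice(reader, batch_size))
        if not batch:
            break
        conn.executemany(insert_sql, batch)
        total += len(batch)
    conn.commit()
    return total


# Hypothetical sample data standing in for an uploaded file.
sample = io.StringIO("Order ID, Customer Name ,Total ($)\n1,Ada,19.99\n2,Grace,5.00\n")
conn = sqlite3.connect(":memory:")
rows_loaded = ingest_csv(sample, conn, "orders", batch_size=1)
print(rows_loaded)
print(conn.execute("SELECT order_id, customer_name, total FROM orders").fetchall())
```

Reading in fixed-size batches is what keeps memory flat on multi-gigabyte files; a real PostgreSQL load would more likely use a bulk path such as `COPY` rather than batched inserts.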

Every answer cites the exact rows and tables it pulled from.
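As a rough illustration of what row-level citations mean, the sketch below retrieves the rows most relevant to a question and returns each one tagged with its table and row ID. It is a toy under stated assumptions: simple keyword overlap stands in for embedding search, and `Citation`, `tokenize`, and `retrieve` are hypothetical names, not part of our API.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Citation:
    table: str
    row_id: int
    text: str


def tokenize(text: str) -> set[str]:
    """Lowercase the text and split it into alphanumeric tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, rows: dict[int, str], table: str, k: int = 2) -> list[Citation]:
    """Return up to k rows sharing the most tokens with the question.

    Keyword overlap is an assumption standing in for vector similarity.
    """
    question_tokens = tokenize(question)
    scored = []
    for row_id, text in rows.items():
        overlap = len(question_tokens & tokenize(text))
        if overlap:
            scored.append((overlap, row_id, text))
    # Highest overlap first; ties broken by lowest row ID.
    scored.sort(key=lambda item: (-item[0], item[1]))
    return [Citation(table, row_id, text) for _, row_id, text in scored[:k]]


# Hypothetical rows standing in for an ingested table.
rows = {
    1: "Ada ordered a keyboard for 19.99",
    2: "Grace ordered a monitor for 149.00",
    3: "Linus returned a mouse",
}
hits = retrieve("Who ordered a monitor?", rows, table="orders")
for hit in hits:
    print(f"[{hit.table}#{hit.row_id}] {hit.text}")
```

Because each hit carries its table name and row ID, any answer built from the hits can point back to the exact records it used.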

Your spreadsheets land in a real database you can query or export.

We normalize multi-gigabyte CSV and Excel files, messy schemas included.

Speak with our team to see how our AI-native platform fits your use case.